-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-txt-www.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]

-

Robots.txt and SEO: Everything You Need to Know

-

Robots.txt - The SEOptimer

-

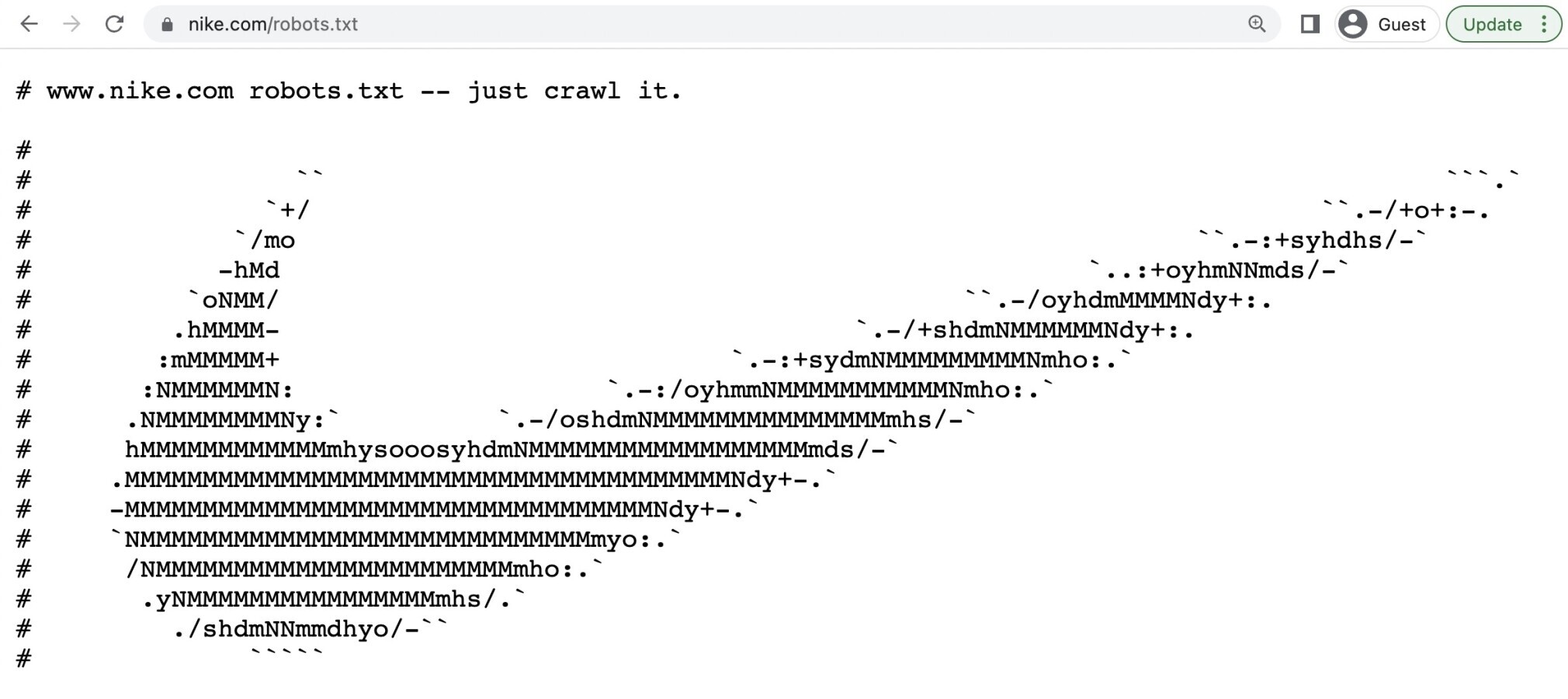

A Guide to Robots.txt - Everything SEOs Need to Know - Lumar

-

What Is A Robots.txt File? Best Practices For Robot.txt

-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-txt-https-security.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]

-

keys to building a Robots.txt that works - Oncrawl's

-

Robots.txt: Ultimate Guide for SEO Examples)

-

Best Practices for Up Meta Robots Tags &

-

Merj | Robots.txt: Committing to Disallow

-

How To Use robots.txt to Block Subdomain

-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-gsc-tester-www.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]

-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-gsc-fetch-block.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]

-

Complete Guide to Robots.txt Why It Matters

-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-txt-redirect.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]

-

Common Mistakes and How to Avoid

-

What Is & What Can You Do ) | Mangools

-

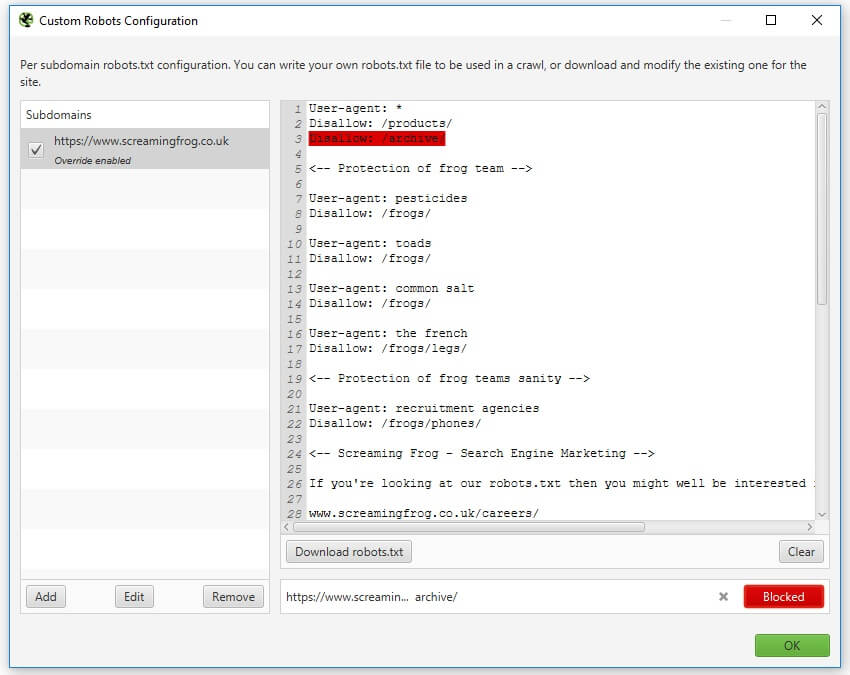

Robots.txt Testing Screaming Frog

-

![Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]](https://searchengineland.com/wp-content/seloads/2020/04/robots-communicate-small.jpg)

Mixed Directives: that robots.txt files are handled by subdomain and protocol, www/non-www and http/https [Case Study]
